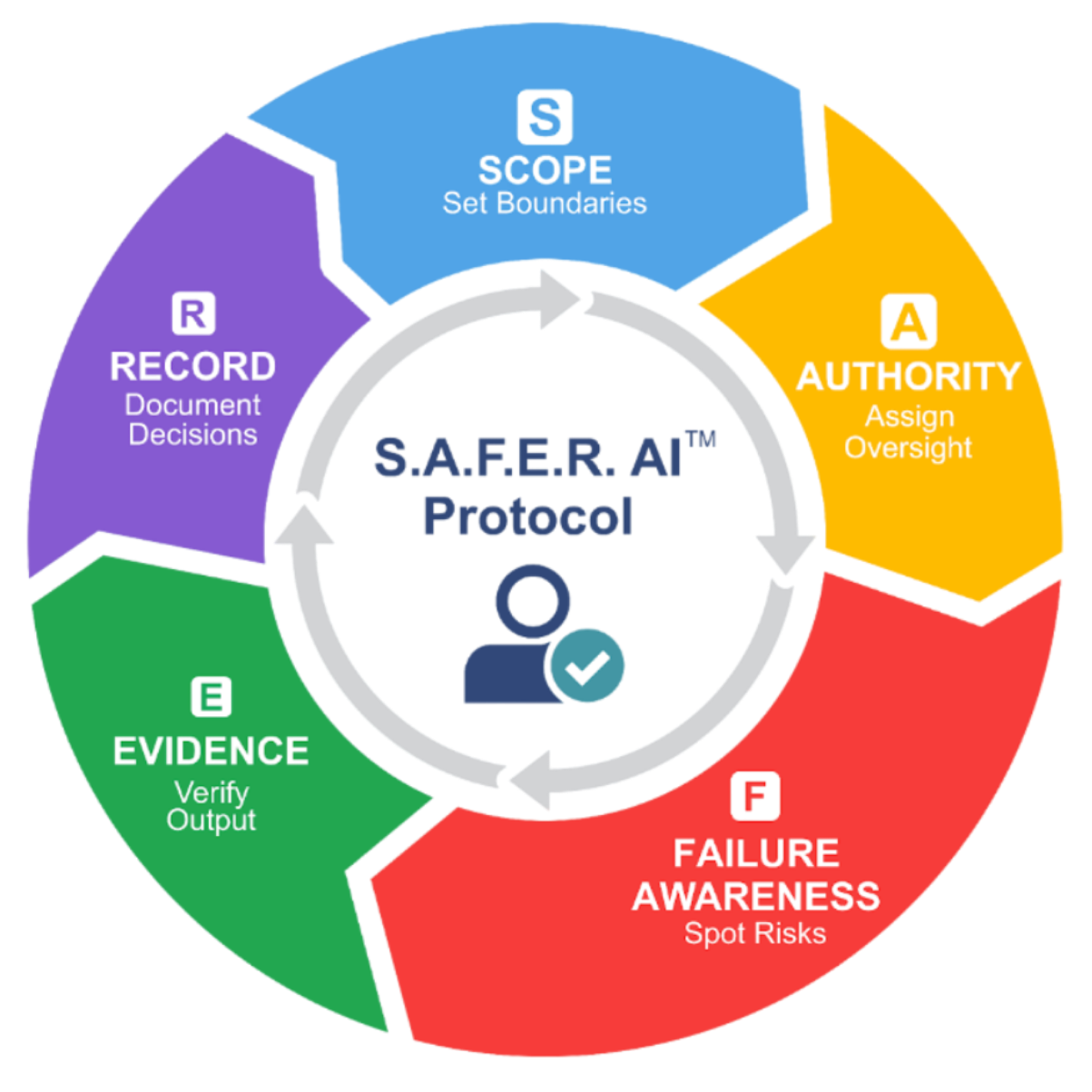

The SAFER AI Protocol

Artificial intelligence systems are increasingly used to generate insights, recommendations, and decisions across organizations and institutions. While AI technologies can be powerful tools, responsibility for evaluating and acting on AI-generated outputs ultimately remains with humans.

The SAFER AI Protocol introduces a conceptual framework to encourage structured, responsible evaluation of AI-generated information before it influences decisions.

SAFER AI Framework

The SAFER AI Protocol is represented as a cyclical model that encourages thoughtful human evaluation at each interaction with an artificial intelligence system.

The framework highlights five key considerations that support responsible interaction with AI-generated outputs:

Understanding the context and boundaries of the AI task.

Clarifying who is responsible for evaluating and approving AI output.

Recognizing potential consequences if the AI output is incorrect.

Seeking verification or supporting information before relying on AI outputs.

Documenting the reasoning and evaluation behind decisions involving AI.
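For teams that want to record these considerations in practice, the five items above could be captured as a simple review record. The following is only a minimal sketch: the class name `AIOutputReview`, its field names, and the helper functions are hypothetical illustrations and are not part of the SAFER AI Protocol itself.

```python
from dataclasses import dataclass, fields


@dataclass
class AIOutputReview:
    """One human review of an AI-generated output (hypothetical structure)."""
    context_and_boundaries: str   # what the AI task was and where its limits lie
    responsible_reviewer: str     # who evaluates and approves the output
    consequences_if_wrong: str    # what could happen if the output is incorrect
    verification: str             # supporting information that was checked
    documented_reasoning: str     # why the output was accepted or rejected


def missing_considerations(review: AIOutputReview) -> list[str]:
    """Return the names of considerations that were left blank."""
    return [f.name for f in fields(review) if not getattr(review, f.name).strip()]


def ready_for_decision(review: AIOutputReview) -> bool:
    """Treat a review as complete only when every consideration has an entry."""
    return not missing_considerations(review)


# Example: a review where verification has not yet been done.
review = AIOutputReview(
    context_and_boundaries="Draft summary of survey responses",
    responsible_reviewer="Department lead",
    consequences_if_wrong="Misleading figures in a report",
    verification="",
    documented_reasoning="Summary matches the raw counts for sampled questions",
)
print(missing_considerations(review))  # ['verification']
```

A record like this does not replace human judgment. It only makes visible which of the five considerations has not yet been addressed before an AI output influences a decision.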

Why Human Oversight Matters

As AI systems become integrated into everyday work and decision-making, individuals and organizations must consider how AI-generated information is evaluated before it influences actions. The SAFER AI Protocol highlights the importance of disciplined human judgment in AI-assisted environments.

About the Framework

The SAFER AI Protocol is a conceptual framework developed to support discussion and research on responsible artificial intelligence governance and human-centered AI interaction.

Intellectual Property Notice

The SAFER AI Protocol and associated visual model were developed by Dr. Lola Longe. The SAFER AI name and visual framework are protected intellectual property.

Whether you’re strengthening faculty skills, preparing students, or equipping executives, our literacy programs provide the tools you need to lead responsibly.

How Dr. Longe Inspires Change

“Dr. Longe’s ability to connect academic research with practical solutions is unmatched. Our faculty left her workshop energized and equipped with real tools.”

“Her keynote at our conference was thought-provoking and inspiring. Dr. Longe makes AI approachable without losing depth or rigor.”

“We invited Dr. Longe to consult on our AI policy. She balanced ethics, strategy, and innovation in a way that resonated across our leadership team.”

“Dr. Longe guided us through AI adoption with clarity and empathy. She understood our challenges and helped us build a roadmap we could trust.”

“Her ability to simplify complex AI and blockchain concepts is a rare gift. Students left her lecture with both knowledge and confidence.”

“Dr. Longe’s workshop for our executive team was a turning point. She helped us see where AI adds value and where caution is needed.”

“She doesn’t just lecture — she engages. Dr. Longe challenged our assumptions and sparked meaningful discussions across our organization.”

“Brilliant, practical, and ethical — Dr. Longe brings all three to every session. Our MBA students rated her module as the most impactful of the year.”